Psychoacoustics: How Your Brain Influences Your Mix

Have you heard the term ‘psychoacoustics’ but have no idea what it means? If so, you are not alone, as this is an important concept that is not all that well understood in the music production community.

It is nonetheless worth taking the time to understand what psychoacoustics is, as it has an effect on how we perceive music every time we listen to it.

This subject is therefore of fundamental importance to processes like mixing and mastering. Below, we will explain the concept and relate it to common mix processes in a way that will help you to consider psychoacoustics when you mix.

What Is Psychoacoustics?

Psychoacoustics is the study of the way that humans perceive sound. We can broadly split the subject into two main areas: ‘perception’ and ‘cognition’. Perception relates to the physical processes that occur in our bodies when we hear sound. Cognition relates to the way that our brains interpret the sounds that we hear. Understanding both of these areas can help us a great deal when it comes to mixing.

The Human Hearing Range

It’s quite common knowledge that the range of human hearing stretches from 20Hz at the bottom end to a maximum of 20,000Hz (or 20kHz) at the top end. In many adults, however, hearing tops out at 16 or 17kHz as our auditory systems deteriorate over time. Less widely known is the fact that we do not hear all frequencies equally across this spectrum. Our ears are actually designed to privilege certain frequencies over others.

Equal-Loudness Contours

The physical construction of our ears is such that some frequencies appear more prominent than others. When sound enters our ears, it eventually reaches a spiral-shaped cavity in the inner ear called the cochlea. Sound waves stimulate the tiny hair cells that line the cochlea, each topped with hair-like bundles called stereocilia, and this stimulation causes electrical signals to be sent to our brains, where they are interpreted as sound.

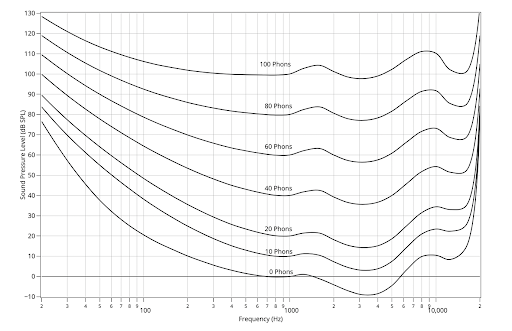

These tiny hair cells are not spread evenly through the cochlea. Instead, they are concentrated in certain areas where they process the sounds in our environments that are most useful to us. For example, we receive a boost in the upper-mid range, an area that is key when deciphering speech. You can see these variations on diagrams called ‘equal-loudness contours’, such as the one pictured below. Each point along a given curve will sound like it has the same loudness as every other point on that curve, despite the differing sound pressure levels.
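To get a rough numeric feel for this uneven sensitivity, the sketch below evaluates the A-weighting curve, a standard measurement curve that loosely follows the shape of one equal-loudness contour at moderate listening levels. It is only a stand-in for the contours themselves, and the function name `a_weighting_db` is our own choice for illustration.

```python
import math

def a_weighting_db(freq_hz: float) -> float:
    """Approximate A-weighting gain in dB at freq_hz (standard analytic form)."""
    f2 = freq_hz ** 2
    numerator = (12194.0 ** 2) * f2 ** 2
    denominator = (
        (f2 + 20.6 ** 2)
        * math.sqrt((f2 + 107.7 ** 2) * (f2 + 737.9 ** 2))
        * (f2 + 12194.0 ** 2)
    )
    # The +2.0 dB offset normalises the curve to roughly 0 dB at 1kHz.
    return 20.0 * math.log10(numerator / denominator) + 2.0

# Print the weighting at a few spot frequencies across the hearing range.
for freq in (50, 100, 1000, 3000, 10000):
    print(f"{freq:>6} Hz: {a_weighting_db(freq):+6.1f} dB")
```

Low frequencies come out strongly negative, while the upper-mid range sits at or slightly above 0 dB, which matches the boost described above.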

Localisation and the Phantom Image

Your auditory system determines how you locate sounds around you in space. This is called ‘localisation’. Several different factors help us figure out where a specific sound is coming from. Firstly, there is the position of our ears. Our ears are on either side of our head, meaning that there is, of course, a small distance between them. That means that a sound coming to us from the left will reach our left ear first, while a sound coming from the right will reach our right ear first.

Our brains use this information, as well as more complex cues that take into account the shape of our ears and our heads, to decode where the soundwaves we are hearing are coming from.
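The arrival-time cue described above can be put into rough numbers. The sketch below uses a deliberately simple straight-line model; the ear spacing of 0.18m, the speed of sound of 343m/s and the function name are all assumptions for illustration, and the model ignores the head and ear shape cues mentioned above.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumed: air at roughly room temperature
EAR_SPACING_M = 0.18        # assumed: approximate distance between the ears

def interaural_time_difference(azimuth_deg: float) -> float:
    """Seconds by which a sound reaches the right ear before the left.

    azimuth_deg is 0 straight ahead, +90 hard right, -90 hard left.
    A negative result means the left ear hears the sound first.
    """
    extra_path_m = EAR_SPACING_M * math.sin(math.radians(azimuth_deg))
    return extra_path_m / SPEED_OF_SOUND_M_S

# Show the time difference in microseconds for a few source directions.
for azimuth in (-90, -30, 0, 30, 90):
    microseconds = interaural_time_difference(azimuth) * 1e6
    print(f"{azimuth:>4} degrees: {microseconds:+7.1f} microseconds")
```

Even for a sound directly to one side, the difference under these assumptions is only around half a millisecond, which gives a sense of how fine the timing cues are.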

We can use these systems, and in some ways trick them, to create a stereo image in our music. If you play the same sound at the same volume through two identical speakers, then we perceive that the sound is coming from the mid-point in space between the two speakers.

This is called the ‘phantom image’ and it is the foundation for the stereo effect. As we raise and lower the volume of individual mix elements in each speaker, we trick our brains into believing that the sound is moving in space between one speaker and the other. In this manner, we can use panning to create nice, wide stereo images.
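As a minimal sketch of how a pan control might turn a position into per-speaker volumes, the code below uses a constant-power pan law. This is one common approach rather than the only one, and the function name and the -1 to +1 pan range are assumptions made for this example.

```python
import math

def constant_power_gains(pan: float) -> tuple[float, float]:
    """Return (left_gain, right_gain) for pan in [-1.0, 1.0].

    -1.0 is hard left, 0.0 is centre, +1.0 is hard right.
    """
    if not -1.0 <= pan <= 1.0:
        raise ValueError("pan must be between -1.0 and 1.0")
    # Map the pan position onto a quarter circle so that
    # left_gain**2 + right_gain**2 stays at 1 for every position.
    angle = (pan + 1.0) * math.pi / 4.0
    return math.cos(angle), math.sin(angle)

# Print the speaker gains for a sweep from hard left to hard right.
for pan in (-1.0, -0.5, 0.0, 0.5, 1.0):
    left, right = constant_power_gains(pan)
    print(f"pan {pan:+.1f}: left {left:.3f}, right {right:.3f}")
```

At the centre position both speakers receive the same gain, which is the equal-volume condition that produces the phantom image.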

Frequency Masking

Frequency masking is one of the main challenges we must overcome when mixing music. This is the phenomenon of two instruments obscuring each other in the mix when their frequency ranges are too similar. The reasons for this become clear when we consider the physiology of the ear once again.

Although the two instruments in question might be positioned in different places, when the sound waves reach our ears, both sounds stimulate the same areas in the cochlea. If the areas excited by the two sounds are very close together, then we can struggle to tell one sound from the other.

This problem can be fixed through EQing: we can sculpt sounds so that each musical part sits in its own place on the frequency spectrum. This is in fact one of the main reasons why EQ is such an important tool when it comes to mixing music.
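One rough way to reason about whether two parts are likely to excite neighbouring areas of the cochlea is the Bark scale, a psychoacoustic frequency scale. The sketch below uses Traunmüller's approximation of that scale; the one-Bark threshold and the function names are simplifying assumptions for illustration, and real masking also depends on level and timing.

```python
def hz_to_bark(freq_hz: float) -> float:
    """Convert a frequency to the Bark scale (Traunmüller's approximation)."""
    return 26.81 * freq_hz / (1960.0 + freq_hz) - 0.53

def likely_to_mask(freq_a_hz: float, freq_b_hz: float,
                   threshold_bark: float = 1.0) -> bool:
    """Crude check: treat sounds closer than threshold_bark as overlapping."""
    return abs(hz_to_bark(freq_a_hz) - hz_to_bark(freq_b_hz)) < threshold_bark

# Hypothetical pairs of dominant frequencies from two mix elements.
for freq_a, freq_b in ((60, 80), (100, 120), (100, 1000), (1000, 8000)):
    verdict = "may clash" if likely_to_mask(freq_a, freq_b) else "well separated"
    print(f"{freq_a:>5} Hz vs {freq_b:>5} Hz: {verdict}")
```

A check like this is only a starting point for deciding where an EQ cut might help; your ears remain the final judge.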

The Missing Fundamental

Sometimes also known as the ‘phantom fundamental’, this is another fascinating phenomenon that can be used to trick our ears into hearing something that simply isn’t there! To explain this concept properly, we first need to define harmonics. If you play a note on a piano with a pitch of 100Hz, the sound that is generated is incredibly complicated, containing a large range of frequencies. Try listening to a 100Hz sine wave: it is a very simple sound by comparison, as we only hear that ‘fundamental’ 100Hz tone. On a piano, we get that fundamental tone at 100Hz, but we also get multi-layered ‘harmonic frequencies’, or ‘overtones’, at whole-number multiples of that original 100Hz (200Hz, 300Hz, 400Hz and so on). However, we hear this as a single cohesive sound (a piano note) rather than noticing the individual frequencies themselves.

Things get really interesting when we remove the fundamental tone but retain the harmonics. If we do this, we still ‘hear’ the fundamental, even though it’s not there! Our brains hear the harmonic overtones and extrapolate the missing fundamental from them so that we still perceive the original pitch. This can be important in certain scenarios, for example when listening to a low bass sound through laptop speakers.
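We can illustrate the idea with a small experiment. The sketch below builds a signal from the overtones of 100Hz only, leaving the fundamental out, and then estimates its repetition rate with a simple autocorrelation. This is a crude stand-in for what the brain does, not a model of hearing, and the sample rate, window length and function names are assumptions for the example.

```python
import math

SAMPLE_RATE = 8000                   # assumed sample rate in Hz
HARMONICS_HZ = (200, 300, 400, 500)  # overtones of 100Hz, fundamental left out
MIN_HZ, MAX_HZ = 60.0, 500.0         # pitch search range
WINDOW = 240                         # analysis window in samples (30 ms here)

min_lag = int(SAMPLE_RATE / MAX_HZ)
max_lag = int(SAMPLE_RATE / MIN_HZ)

# Generate enough samples for the window plus the longest lag we test.
signal = [
    sum(math.sin(2 * math.pi * f * n / SAMPLE_RATE) for f in HARMONICS_HZ)
    for n in range(WINDOW + max_lag)
]

def autocorrelation(samples, lag, window):
    """Similarity between the first `window` samples and a copy delayed by `lag`."""
    return sum(samples[n] * samples[n + lag] for n in range(window))

def estimate_pitch_hz(samples):
    """Return the frequency whose period best matches the signal's repetition."""
    best_lag = max(
        range(min_lag, max_lag + 1),
        key=lambda lag: autocorrelation(samples, lag, WINDOW),
    )
    return SAMPLE_RATE / best_lag

estimated_hz = estimate_pitch_hz(signal)
print(f"Components present: {HARMONICS_HZ}")
print(f"Estimated pitch: {estimated_hz:.1f} Hz")
```

The signal repeats at the rate of the absent fundamental even though it contains no energy at that frequency, which is the pattern our brains latch onto.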

We can use our knowledge here by applying an effect like saturation on a sub-bass part: if we create harmonic overtones with this effect, we can make this part audible on smaller systems that cannot reproduce the lowest frequencies.
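To see how saturation adds overtones, the sketch below passes a 50Hz sine wave through a `tanh` soft clipper and measures the level at the first few harmonics. The `tanh` curve is only a stand-in for a saturation plugin, and the drive amount of 3.0, the sample rate and the function name are assumptions for illustration.

```python
import math

SAMPLE_RATE = 8000   # assumed sample rate in Hz
SUB_BASS_HZ = 50     # hypothetical sub-bass note
NUM_SAMPLES = 1600   # 0.2 seconds, a whole number of 50Hz cycles
DRIVE = 3.0          # assumed gain into the soft clipper

def harmonic_level(samples, freq_hz):
    """Amplitude of the component at freq_hz, from a single-bin Fourier sum."""
    real = sum(s * math.cos(2 * math.pi * freq_hz * n / SAMPLE_RATE)
               for n, s in enumerate(samples))
    imag = sum(s * math.sin(2 * math.pi * freq_hz * n / SAMPLE_RATE)
               for n, s in enumerate(samples))
    return 2.0 * math.hypot(real, imag) / len(samples)

clean = [math.sin(2 * math.pi * SUB_BASS_HZ * n / SAMPLE_RATE)
         for n in range(NUM_SAMPLES)]
saturated = [math.tanh(DRIVE * s) for s in clean]

# Compare the clean and saturated levels at the first five harmonics.
for multiple in range(1, 6):
    freq = SUB_BASS_HZ * multiple
    print(f"{freq:>4} Hz: clean {harmonic_level(clean, freq):.3f}, "
          f"saturated {harmonic_level(saturated, freq):.3f}")
```

Because this particular curve is symmetrical, it adds odd-numbered harmonics only (150Hz, 250Hz and so on). Those overtones sit in a range that small speakers can reproduce, which is what lets the listener infer the low note.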

Psychoacoustics is a fascinating subject. It can help us to have a greater understanding of the audio processes that we use in our workflows on a daily basis. Now that you have an understanding of some of the key concepts of psychoacoustics, try to use them to think more deeply about the mix decisions that you make in future.
